Deep Learning

This paper extends tools recently proposed in the nascent field of explainable artificial intelligence, such as Layer-wise Relevance Propagation, to coarse-grained potentials based on graph neural networks.

This article compares deterministic and stochastic dynamical classifiers in their ability to withstand random, noise-based adversarial attacks. The study provides insight into how these two types of classifier can be used to mitigate adversarial threats.

Finite-width one-hidden-layer networks display nontrivial output-output correlations that vanish in the lazy-training infinite-width limit. This manuscript rationalizes this evidence using kernel shape renormalization in the proportional limit of Bayesian deep learning.

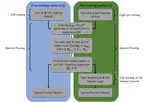

This study explores the teacher-student paradigm in machine learning, focusing on overparameterized student networks trained by fixed teacher networks. It introduces a new optimization scheme that uses a spectral representation of the linear information transfer between layers. This approach makes it possible to identify a stable student substructure that mirrors the teacher’s complexity. The method shows that pruning unimportant nodes, ranked by their optimized eigenvalues, does not degrade performance, indicating a second-order phase transition with universality traits in neural network training.

EODECAs, merging machine learning with dynamical systems, enhance interpretability and transparency in neural networks. They employ continuous ordinary differential equations, offering both high classification accuracy and an understanding of data processes, addressing the opacity of traditional deep learning models. This approach signifies a step towards more comprehensible machine learning models.

The Complex Recurrent Spectral Network (C-RSN) is a novel AI model that more accurately mimics biological neural processes using localized non-linearity, complex eigenvalues, and separated memory/input functionalities. It demonstrates dynamic, oscillatory behavior akin to biological cognition and effectively classifies data, as shown in tests with the MNIST dataset.

This study introduces a neural network-based method for nonparametric analysis of the Hubble diagram, extended to high redshifts. Validated using simulated data, the method aligns with a flat Λ (Lambda) cold dark matter model (ΩM ≈ 0.3) up to z ≈ 1-1.5, but deviates at higher redshifts. It also suggests increasing ΩM values with redshift, indicating potential dark energy evolution.
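For reference, the flat ΛCDM baseline that such a reconstruction is compared against can be sketched as follows. This is not the neural network method of the study; it only evaluates the standard luminosity distance $d_L(z) = (1+z)\,\frac{c}{H_0}\int_0^z dz'/\sqrt{\Omega_M(1+z')^3 + 1 - \Omega_M}$ and the corresponding distance modulus. The Hubble constant `h0=70.0` km/s/Mpc, the function names and the trapezoidal integration are assumptions made for illustration.

```python
import numpy as np

C_KM_S = 299792.458  # speed of light in km/s


def luminosity_distance(z, omega_m=0.3, h0=70.0, n_steps=2000):
    """Luminosity distance in Mpc for a flat LambdaCDM model.

    h0 (km/s/Mpc) and n_steps are illustrative assumptions.
    """
    zs = np.linspace(0.0, z, n_steps + 1)
    # 1 / E(z) with E(z) = sqrt(Omega_M (1+z)^3 + (1 - Omega_M))
    inv_e = 1.0 / np.sqrt(omega_m * (1.0 + zs) ** 3 + (1.0 - omega_m))
    # Composite trapezoidal rule on the uniform grid
    dz = zs[1] - zs[0]
    integral = dz * (inv_e.sum() - 0.5 * (inv_e[0] + inv_e[-1]))
    return (1.0 + z) * (C_KM_S / h0) * integral


def distance_modulus(z, omega_m=0.3, h0=70.0):
    """Distance modulus mu = 5 log10(d_L / Mpc) + 25."""
    return 5.0 * np.log10(luminosity_distance(z, omega_m, h0)) + 25.0
```

Evaluating `distance_modulus` on a grid of redshifts for several values of `omega_m` gives the family of reference curves against which a nonparametric fit of the Hubble diagram can be compared.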

The Recurrent Spectral Network (RSN) is a new automated classification method that uses dynamical systems to direct data to specific targets, demonstrating effectiveness with both a simple model and a standard image processing dataset.

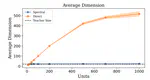

Training neural networks in spectral space optimizes eigenvalues and eigenvectors instead of individual weights, inducing an effective implicit bias that enables node pruning without sacrificing performance.
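The idea of pruning nodes by their eigenvalues can be illustrated with a minimal sketch. It assumes a simplified setting that is not spelled out in the summary above: each hidden node `j` carries one trained eigenvalue, and its outgoing weights are proportional to that eigenvalue, so a node with a vanishing eigenvalue contributes nothing downstream. The function names, the ReLU activation and the threshold are all hypothetical.

```python
import numpy as np


def prune_hidden_nodes(w_in, w_out, eigenvalues, threshold):
    """Drop hidden nodes whose eigenvalue magnitude is at or below threshold.

    w_in:  (n_hidden, n_in) weights into the hidden layer
    w_out: (n_out, n_hidden) weights out of the hidden layer
    """
    keep = np.abs(eigenvalues) > threshold
    return w_in[keep, :], w_out[:, keep], keep


def forward(w_in, w_out, x):
    """One-hidden-layer network with a ReLU activation (an assumption)."""
    hidden = np.maximum(w_in @ x, 0.0)
    return w_out @ hidden


# Toy example: three of the six hidden nodes have zero eigenvalue.
rng = np.random.default_rng(0)
eigenvalues = np.array([1.2, 0.0, -0.8, 0.0, 0.5, 0.0])
w_in = rng.normal(size=(6, 4))
# Assumed simplified spectral form: outgoing weights scale with the eigenvalue.
w_out = eigenvalues[None, :] * rng.normal(size=(3, 6))

w_in_small, w_out_small, keep = prune_hidden_nodes(w_in, w_out, eigenvalues, 1e-12)
```

In this toy setting the pruned network reproduces the output of the full one exactly, because the removed nodes had zero outgoing weights. With small but nonzero eigenvalues the agreement would only be approximate.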

We introduce a new method for training deep neural networks by focusing on the spectral space, rather than the traditional node space. It involves adjusting the eigenvalues and eigenvectors of transfer operators, offering improved performance over standard methods with an equivalent number of parameters.
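One way to make the spectral parametrization concrete is sketched below. It assumes a particular construction that the summary does not specify: the transfer between a layer of size `n_in` and a layer of size `n_out` is embedded in a square operator $A = \Phi \Lambda \Phi^{-1}$, where $\Phi$ is the identity plus a lower-left block `phi`. Under that assumption the effective weights are $w_{ij} = (\lambda^{\mathrm{in}}_j - \lambda^{\mathrm{out}}_i)\,\phi_{ij}$. All names are hypothetical.

```python
import numpy as np


def spectral_weights(phi, lam_in, lam_out):
    """Effective weights w_ij = (lam_in_j - lam_out_i) * phi_ij.

    phi: (n_out, n_in) eigenvector block; lam_in: (n_in,); lam_out: (n_out,)
    """
    return (lam_in[None, :] - lam_out[:, None]) * phi


def transfer_operator(phi, lam_in, lam_out):
    """Full (n_in + n_out) square operator A = Phi Lambda Phi^{-1}."""
    n_out, n_in = phi.shape
    n = n_in + n_out
    eigvecs = np.eye(n)
    eigvecs[n_in:, :n_in] = phi  # lower-left block holds the trainable entries
    eigvals = np.diag(np.concatenate([lam_in, lam_out]))
    return eigvecs @ eigvals @ np.linalg.inv(eigvecs)
```

The lower-left block of `transfer_operator(...)` coincides with `spectral_weights(...)`, so training `phi`, `lam_in` and `lam_out` by gradient descent is an alternative parametrization of an ordinary weight matrix rather than a different architecture.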