
This research introduces a learning algorithm based on the Wilson-Cowan model for metapopulation, a neural mass network model that treats different subcortical regions of the brain as connected nodes. The model incorporates stable attractors into its dynamics, enabling it to solve various classification tasks. The algorithm is tested on datasets such as MNIST, Fashion MNIST, CIFAR-10, and TF-FLOWERS, as well as in combination with a transformer architecture (BERT) on IMDB, achieving high classification accuracy.

This research explores how higher-order interactions in complex systems influence the dynamics of topological signals, revealing new insights into the interplay between topology and dynamics.

This research introduces Global Topological Dirac Synchronization, a state where oscillators associated with simplices and cells of arbitrary dimension, coupled by the Topological Dirac operator, operate in unison. The study combines algebraic topology, non-linear dynamics, and machine learning to derive the conditions for the existence and stability of this synchronization state.

This research explores Turing patterns on discrete topologies, extending the classical theory of pattern formation to networks and higher-order structures. The study highlights the potential of this approach to transcend the conventional boundaries of PDE-based methods, offering insights into self-organization phenomena across various disciplines.

This research explores the global synchronization of topological signals on higher-order networks, revealing that topological constraints impact synchronization differently across various network structures.

This study introduces a neural network-based method for nonparametric analysis of the Hubble diagram, extended to high redshifts. Validated using simulated data, the method aligns with a flat Λ (Lambda) cold dark matter model (ΩM ≈ 0.3) up to z ≈ 1-1.5, but deviates at higher redshifts. It also suggests increasing ΩM values with redshift, indicating potential dark energy evolution.
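For orientation, the flat ΛCDM baseline against which such a reconstruction is compared can be evaluated directly. The sketch below is not the study's neural network method; it only computes the standard luminosity distance and distance modulus of a flat ΛCDM model. The value H0 = 70 km/s/Mpc and the function names are assumptions made for illustration.

```python
import numpy as np

C_KM_S = 299792.458  # speed of light in km/s


def luminosity_distance(z, omega_m=0.3, h0=70.0, n_steps=2000):
    """Luminosity distance in Mpc for flat LCDM (h0 is an assumed value)."""
    zs = np.linspace(0.0, z, n_steps + 1)
    # Dimensionless expansion rate E(z) for flat LCDM, radiation neglected
    e_z = np.sqrt(omega_m * (1.0 + zs) ** 3 + (1.0 - omega_m))
    integrand = 1.0 / e_z
    # Trapezoidal rule for the comoving-distance integral
    integral = np.sum(0.5 * (integrand[1:] + integrand[:-1]) * np.diff(zs))
    return (1.0 + z) * (C_KM_S / h0) * integral


def distance_modulus(z, omega_m=0.3, h0=70.0):
    """Distance modulus mu = 5 log10(d_L / Mpc) + 25."""
    return 5.0 * np.log10(luminosity_distance(z, omega_m, h0)) + 25.0


if __name__ == "__main__":
    for z in (0.5, 1.0, 1.5, 3.0):
        print(z, distance_modulus(z, omega_m=0.3), distance_modulus(z, omega_m=0.4))
```

Comparing the two columns shows how a larger ΩM lowers the predicted distance modulus at a given redshift, which is the kind of shift a redshift-dependent ΩM would produce in the Hubble diagram.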

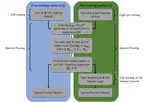

The Recurrent Spectral Network (RSN) is a new automated classification method that uses dynamical systems to direct data to specific targets, demonstrating effectiveness with both a simple model and a standard image processing dataset.

This research examines reaction-diffusion processes on networks, particularly focusing on topological signals across nodes, links, and cells. It uses the Dirac operator to study interactions and reveals conditions for Turing pattern emergence, validating the findings on network models and square lattices.
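As a minimal illustration of the operator involved, the sketch below builds the Dirac operator for signals on nodes and links only; cells are omitted for brevity. The three-node path network is a toy assumption, not taken from the paper. The operator couples node and link signals through the boundary matrix, and its square is block diagonal, with the graph Laplacian and the link Laplacian as blocks.

```python
import numpy as np

# Toy network (assumed for illustration): a path 0 - 1 - 2 with two oriented links
links = [(0, 1), (1, 2)]
n_nodes = 3
n_links = len(links)

# Boundary (incidence) matrix B1: the column of link (i, j) has -1 at i and +1 at j
B1 = np.zeros((n_nodes, n_links))
for k, (i, j) in enumerate(links):
    B1[i, k] = -1.0
    B1[j, k] = 1.0

# Dirac operator acting on the stacked signal (node values, link values)
dirac = np.block([
    [np.zeros((n_nodes, n_nodes)), B1],
    [B1.T, np.zeros((n_links, n_links))],
])

L0 = B1 @ B1.T  # graph Laplacian on nodes
L1 = B1.T @ B1  # Laplacian on links (this toy network has no triangles)

# The square of the Dirac operator decouples into the two Laplacians
dirac_squared = dirac @ dirac
```

Because the Dirac operator itself is off-diagonal, a reaction-diffusion system that uses it mixes node and link signals, whereas the Laplacians alone keep each dimension separate.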

Training neural networks in spectral space focuses on optimizing eigenvalues and eigenvectors instead of individual weights, yielding an effective implicit bias that enables node pruning without sacrificing performance.

We introduce a new method for training deep neural networks by focusing on the spectral space, rather than the traditional node space. It involves adjusting the eigenvalues and eigenvectors of transfer operators, offering improved performance over standard methods with an equivalent number of parameters.
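A minimal sketch of what such a spectral parametrization can look like for a single pair of layers follows. The summary above does not specify the construction, so the block-triangular eigenvector matrix used here is an assumption, and all names (`phi`, `lam_in`, `lam_out`, `spectral_weights`) are hypothetical. Under that assumption, the inter-layer weights follow in closed form from the eigenvalues and eigenvector entries, and the code cross-checks the closed form against the full matrix product.

```python
import numpy as np

rng = np.random.default_rng(0)
n_in, n_out = 4, 3

# Trainable spectral parameters (hypothetical names):
phi = rng.normal(size=(n_out, n_in))  # nontrivial block of the eigenvector matrix
lam_in = rng.normal(size=n_in)        # eigenvalues attached to input nodes
lam_out = rng.normal(size=n_out)      # eigenvalues attached to output nodes


def spectral_weights(phi, lam_in, lam_out):
    """Closed form for the weights: W[i, j] = phi[i, j] * (lam_in[j] - lam_out[i])."""
    return phi * (lam_in[None, :] - lam_out[:, None])


# Cross-check: build A = Phi @ Lambda @ Phi^{-1} with the assumed block structure
Phi = np.block([
    [np.eye(n_in), np.zeros((n_in, n_out))],
    [phi, np.eye(n_out)],
])
Lam = np.diag(np.concatenate([lam_in, lam_out]))
A = Phi @ Lam @ np.linalg.inv(Phi)

W = spectral_weights(phi, lam_in, lam_out)
W_from_A = A[n_in:, :n_in]  # lower-left block maps input nodes to output nodes
```

In this form the gradient updates act on `phi`, `lam_in`, and `lam_out` rather than on the entries of `W`, so a single eigenvalue rescales every weight attached to its node.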