Article-Conference

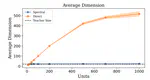

This study explores the teacher-student paradigm in machine learning, focusing on overparameterized student networks trained against a fixed teacher network. It introduces a new optimization scheme based on a spectral representation of the linear transfer of information between layers. This approach makes it possible to identify a stable substructure within the student that mirrors the teacher’s complexity. Pruning unimportant nodes, ranked by their optimized eigenvalues, does not degrade performance, a behavior the study interprets as a second-order phase transition with universality traits in neural network training.
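The pruning idea described above can be illustrated with a minimal sketch. It is a toy under stated assumptions, not the study's actual spectral parametrization: each hidden node is assumed to carry one scalar eigenvalue that scales its activation, and all names (`rank_nodes`, `prune`, `forward`, `restrict`) and numbers are hypothetical. The eigenvalues are set by hand so that only two nodes matter, standing in for the outcome of training.

```python
def rank_nodes(eigenvalues):
    """Hidden-node indices sorted by decreasing eigenvalue magnitude."""
    return sorted(range(len(eigenvalues)),
                  key=lambda i: abs(eigenvalues[i]), reverse=True)


def prune(eigenvalues, keep):
    """Indices (in ascending order) of the `keep` most important nodes."""
    return sorted(rank_nodes(eigenvalues)[:keep])


def forward(x, w_in, eigenvalues, w_out):
    """One hidden layer: ReLU activations, each scaled by its node eigenvalue.

    Assumption of this sketch: the eigenvalue acts as a per-node gain.
    """
    hidden = [lam * max(0.0, sum(w * xi for w, xi in zip(row, x)))
              for row, lam in zip(w_in, eigenvalues)]
    return [sum(w * h for w, h in zip(row, hidden)) for row in w_out]


def restrict(w_in, eigenvalues, w_out, kept):
    """Drop every hidden node that is not listed in `kept`."""
    return ([w_in[i] for i in kept],
            [eigenvalues[i] for i in kept],
            [[row[i] for i in kept] for row in w_out])


# Hypothetical student: 2 inputs, 5 hidden nodes, 1 output.
w_in = [[0.5, -1.0], [1.2, 0.3], [-0.7, 0.9], [0.4, 0.8], [1.1, -0.2]]
w_out = [[0.6, -1.3, 0.2, 0.9, -0.5]]
# Hand-set eigenvalues: only nodes 1 and 3 carry a non-zero value.
eigenvalues = [0.0, 1.5, 0.0, -0.8, 0.0]

kept = prune(eigenvalues, keep=2)
x = [1.0, 2.0]
full = forward(x, w_in, eigenvalues, w_out)
pruned = forward(x, *restrict(w_in, eigenvalues, w_out, kept))
```

In this toy, `kept` contains the two nodes with non-zero eigenvalues, and `full` and `pruned` agree because the removed nodes contributed nothing to the output. With trained eigenvalues that are small but not exactly zero, the agreement would be approximate rather than exact.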