Deep Learning

Explainability and Model Development

My research in deep learning started from a seemingly simple yet profound question: can a neural network be represented as a graph, and how does its functionality relate to the spectral properties of that graph? Following this line, it becomes possible to rank neurons by relevance for structural pruning and to reformulate the learning process in terms of dynamical systems, leading to more adaptive recurrent architectures. More recently, my focus has shifted towards Graph Neural Networks (GNNs) in the context of molecular physics, a field where mathematical and physical intuition can mutually reinforce each other. This includes developing techniques to approximate the behavior of Message Passing Neural Networks (MPNNs) used in molecular potentials, as well as designing architectures tailored to the force matching problem, a central challenge in learning accurate and transferable interatomic force fields from high-accuracy simulation data. I also have applied and more theoretical works in progress; follow me for new papers on the subject!

Related Publications


This paper extends tools recently proposed in the nascent field of explainable artificial intelligence, such as Layer-wise Relevance Propagation, to coarse-grained potentials based on graph neural networks.

This article explores the comparison between deterministic and stochastic dynamical classifiers in the context of opposing random adversarial attacks using noise. The study provides insights into how these different types of classifiers can be used to mitigate adversarial threats.

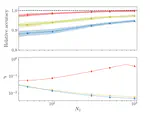

Finite-width one-hidden-layer networks display nontrivial output-output correlations that vanish in the lazy-training infinite-width limit. This manuscript rationalizes this evidence using kernel shape renormalization in the proportional limit of Bayesian deep learning.

This research introduces a learning algorithm based on the Wilson-Cowan model for metapopulation, a neural mass network model that treats different subcortical regions of the brain as connected nodes. The model incorporates stable attractors into its dynamics, enabling it to solve various classification tasks. The algorithm is tested on datasets such as MNIST, Fashion MNIST, CIFAR-10, and TF-FLOWERS, as well as in combination with a transformer architecture (BERT) on IMDB, achieving high classification accuracy.

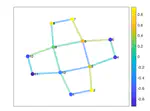

This research explores how higher-order interactions in complex systems influence the dynamics of topological signals, revealing new insights into the interplay between topology and dynamics.

This research introduces Global Topological Dirac Synchronization, a state where oscillators associated with simplices and cells of arbitrary dimension, coupled by the Topological Dirac operator, operate in unison. The study combines algebraic topology, non-linear dynamics, and machine learning to derive the conditions for the existence and stability of this synchronization state.

This research explores Turing patterns on discrete topologies, extending the classical theory of pattern formation to networks and higher-order structures. The study highlights the potential of this approach to transcend the conventional boundaries of PDE-based methods, offering insights into self-organization phenomena across various disciplines.
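For readers unfamiliar with pattern formation on networks, the following sketch shows the standard linear stability check: the homogeneous state is perturbed along each Laplacian eigenmode, and a Turing instability appears when some mode has a positive growth rate even though the state is stable without diffusion. The ring network, the Jacobian entries, and the diffusion coefficients are toy assumptions, not values from the paper.

```python
import cmath
import math


def growth_rate(jac, d_u, d_v, lap_eig):
    """Largest real part of the eigenvalues of J + lap_eig * diag(d_u, d_v)."""
    (f_u, f_v), (g_u, g_v) = jac
    a = f_u + d_u * lap_eig
    d = g_v + d_v * lap_eig
    trace = a + d
    det = a * d - f_v * g_u
    # Closed-form eigenvalues of a 2x2 matrix; cmath handles complex pairs.
    root = cmath.sqrt(trace * trace - 4.0 * det)
    return max(((trace + root) / 2).real, ((trace - root) / 2).real)


def ring_laplacian_spectrum(n):
    """Eigenvalues of the Laplacian (adjacency minus degree) of an n-node ring."""
    return [2.0 * math.cos(2.0 * math.pi * k / n) - 2.0 for k in range(n)]


jac = ((1.0, -2.0), (3.0, -4.0))   # toy kinetics, stable alone: trace < 0, det > 0
d_u, d_v = 1.0, 20.0               # the second species diffuses much faster

rates = [growth_rate(jac, d_u, d_v, lam) for lam in ring_laplacian_spectrum(20)]
unstable_modes = [k for k, r in enumerate(rates) if r > 0]
```

With these toy numbers the uniform mode (index 0) decays, while a small band of intermediate modes grows. On a general network the analytic ring spectrum would be replaced by the numerically computed Laplacian eigenvalues.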

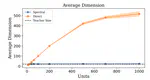

This study explores the teacher-student paradigm in machine learning, focusing on overparameterized student networks trained by fixed teacher networks. It introduces a new optimization scheme using spectral representation of linear information transfer between layers. This approach allows identifying a stable student substructure that mirrors the teacher’s complexity. The method shows that pruning unimportant nodes, based on optimized eigenvalues, does not degrade performance, indicating a second-order phase transition with universality traits in neural network training.
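The pruning step can be pictured with a short sketch: if each hidden node carries one trained eigenvalue, nodes are ranked by eigenvalue magnitude and those below a cutoff are removed together with their incoming and outgoing weights. The helper name `prune_hidden_nodes`, the magnitude threshold, and the toy numbers are hypothetical choices for illustration; the paper's actual criterion may differ in detail.

```python
def prune_hidden_nodes(w_in, w_out, eigenvalues, threshold):
    """Drop hidden nodes whose eigenvalue magnitude is below `threshold`.

    w_in        : n_hidden x n_input weights feeding the hidden layer
    w_out       : n_output x n_hidden weights leaving the hidden layer
    eigenvalues : one (assumed already trained) eigenvalue per hidden node
    Returns the kept node indices and the two reduced weight matrices.
    """
    keep = [j for j, lam in enumerate(eigenvalues) if abs(lam) >= threshold]
    pruned_in = [w_in[j] for j in keep]                      # remove rows
    pruned_out = [[row[j] for j in keep] for row in w_out]   # remove columns
    return keep, pruned_in, pruned_out


# Toy example (all values are made up): 2 inputs, 4 hidden nodes, 1 output.
w_in = [[0.2, -0.1], [0.7, 0.3], [-0.4, 0.9], [0.05, 0.6]]
w_out = [[1.0, -2.0, 0.5, 0.1]]
eigenvalues = [1.3, 0.002, -0.8, 0.0005]

keep, w_in_small, w_out_small = prune_hidden_nodes(w_in, w_out, eigenvalues, 0.01)
print(keep)  # [0, 2]
```

Note that the second hidden node is discarded even though its outgoing weight is the largest in magnitude: in this sketch the ranking relies on the eigenvalues alone, not on the individual weights.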

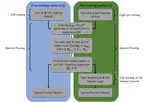

EODECAs, merging machine learning with dynamical systems, enhance interpretability and transparency in neural networks. They employ continuous ordinary differential equations, offering both high classification accuracy and an understanding of data processes, addressing the opacity of traditional deep learning models. This approach signifies a step towards more comprehensible machine learning models.

The Complex Recurrent Spectral Network (C-RSN) is a novel AI model that more accurately mimics biological neural processes using localized non-linearity, complex eigenvalues, and separated memory/input functionalities. It demonstrates dynamic, oscillatory behavior akin to biological cognition and effectively classifies data, as shown in tests with the MNIST dataset.

This research explores the global synchronization of topological signals on higher-order networks, revealing that topological constraints impact synchronization differently across various network structures.

This study introduces a neural network-based method for nonparametric analysis of the Hubble diagram, extended to high redshifts. Validated using simulated data, the method aligns with a flat Λ (Lambda) cold dark matter model (ΩM ≈ 0.3) up to z ≈ 1-1.5, but deviates at higher redshifts. It also suggests increasing ΩM values with redshift, indicating potential dark energy evolution.
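For reference, the flat ΛCDM prediction that such a nonparametric reconstruction is compared against can be computed in a few lines. The sketch below evaluates the luminosity distance and distance modulus by numerical integration; the value H0 = 70 km/s/Mpc, the neglect of radiation, and the function names are assumptions for illustration, not choices taken from the paper.

```python
import math

C_KM_S = 299792.458  # speed of light in km/s


def luminosity_distance(z, omega_m=0.3, h0=70.0, steps=1000):
    """Luminosity distance in Mpc for flat LambdaCDM (matter + Lambda only)."""
    def inv_e(x):
        # 1 / E(x), with E(x) the dimensionless Hubble rate H(x) / H0.
        return 1.0 / math.sqrt(omega_m * (1.0 + x) ** 3 + (1.0 - omega_m))

    # Composite Simpson rule on [0, z]; `steps` must be even.
    h = z / steps
    total = inv_e(0.0) + inv_e(z)
    for i in range(1, steps):
        total += (4.0 if i % 2 else 2.0) * inv_e(i * h)
    comoving = (C_KM_S / h0) * total * h / 3.0
    return (1.0 + z) * comoving


def distance_modulus(z, omega_m=0.3, h0=70.0):
    """Distance modulus mu = 5 log10(d_L / Mpc) + 25, defined for z > 0."""
    return 5.0 * math.log10(luminosity_distance(z, omega_m, h0)) + 25.0
```

Evaluating `distance_modulus` on a grid of redshifts for several values of `omega_m` gives the family of model curves against which a reconstructed Hubble diagram can be checked.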

The Recurrent Spectral Network (RSN) is a new automated classification method that uses dynamical systems to direct data to specific targets, demonstrating effectiveness with both a simple model and a standard image processing dataset.

This research examines reaction-diffusion processes on networks, particularly focusing on topological signals across nodes, links, and cells. It uses the Dirac operator to study interactions and reveals conditions for Turing pattern emergence, validating the findings on network models and square lattices.

Training neural networks in spectral space focuses on optimizing eigenvalues and eigenvectors instead of individual weights, inducing an effective implicit bias that enables node pruning without sacrificing performance.

We introduce a new method for training deep neural networks by focusing on the spectral space, rather than the traditional node space. It involves adjusting the eigenvalues and eigenvectors of transfer operators, offering improved performance over standard methods with an equivalent number of parameters.
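As a rough illustration of what adjusting the eigenvalues and eigenvectors of a transfer operator can mean, the sketch below builds the operator between two consecutive layers as A = ΦΛΦ⁻¹ and reads the usual weight matrix off its lower-left block. The block-triangular form of Φ, the function names, and the toy numbers are assumptions made for illustration, not necessarily the exact construction used in the paper.

```python
def matmul(a, b):
    """Plain-Python matrix product of two lists of rows."""
    return [[sum(a[i][k] * b[k][j] for k in range(len(b)))
             for j in range(len(b[0]))] for i in range(len(a))]


def transfer_operator(phi, lam_in, lam_out):
    """Return A = Phi @ Lambda @ Phi^{-1} for one layer-to-layer mapping.

    phi     : n_out x n_in block of eigenvector components (assumed layout)
    lam_in  : eigenvalues attached to the n_in departure nodes
    lam_out : eigenvalues attached to the n_out arrival nodes
    """
    n_in, n_out = len(lam_in), len(lam_out)
    n = n_in + n_out
    # Phi is the identity plus phi in the lower-left block.
    Phi = [[1.0 if i == j else 0.0 for j in range(n)] for i in range(n)]
    for i in range(n_out):
        for j in range(n_in):
            Phi[n_in + i][j] = phi[i][j]
    # For this block-triangular Phi the inverse is simply 2*I - Phi.
    Phi_inv = [[(2.0 if i == j else 0.0) - Phi[i][j] for j in range(n)]
               for i in range(n)]
    lam = list(lam_in) + list(lam_out)
    Lam = [[lam[i] if i == j else 0.0 for j in range(n)] for i in range(n)]
    return matmul(matmul(Phi, Lam), Phi_inv)


def effective_weights(phi, lam_in, lam_out):
    """Lower-left block of A: the weights acting from one layer to the next."""
    A = transfer_operator(phi, lam_in, lam_out)
    n_in = len(lam_in)
    return [row[:n_in] for row in A[n_in:]]


# Toy example (made-up numbers): 2 departure nodes, 3 arrival nodes.
phi = [[0.5, -1.0], [2.0, 0.25], [1.0, 1.0]]
lam_in, lam_out = [1.0, 2.0], [0.5, -1.0, 0.0]
W = effective_weights(phi, lam_in, lam_out)
```

Under these assumptions each weight factorizes as `W[i][j] = (lam_in[j] - lam_out[i]) * phi[i][j]`, so a single eigenvalue acts on a whole column or row of the weight matrix at once. This is what makes eigenvalues a natural handle for both training and ranking nodes.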

This paper analyzes the effectiveness of containment measures for SARS-CoV-2, using mobility data to gauge their impact. A deep learning model predicts virus spread scenarios in Italy, showing how these measures help flatten the infection curve and estimating the time required for their noticeable effects.

This study presents a variant of spectral learning for deep neural networks, where adjusting two sets of eigenvalues for each layer mapping significantly enhances network performance with fewer trainable parameters. This method, inspired by homeostatic plasticity, offers a computationally efficient alternative to conventional training, achieving comparable results with a simpler parameter setup. It also enables the creation of sparser networks with impressive classification abilities.